|

My research interests center on deep learning and computer vision, with a particular focus on object pose estimation. I've spent time at CMM of Mines ParisTech and L2S of CentraleSupelec. I received my master's degree in Signal and Image Processing from University Paris-Saclay and my bachelor's degree in Optical and Electronic Information from Huazhong University of Science and Technology.

(Last update: Nov 2021)

Email / CV / GitHub / Google Scholar / LinkedIn |

|

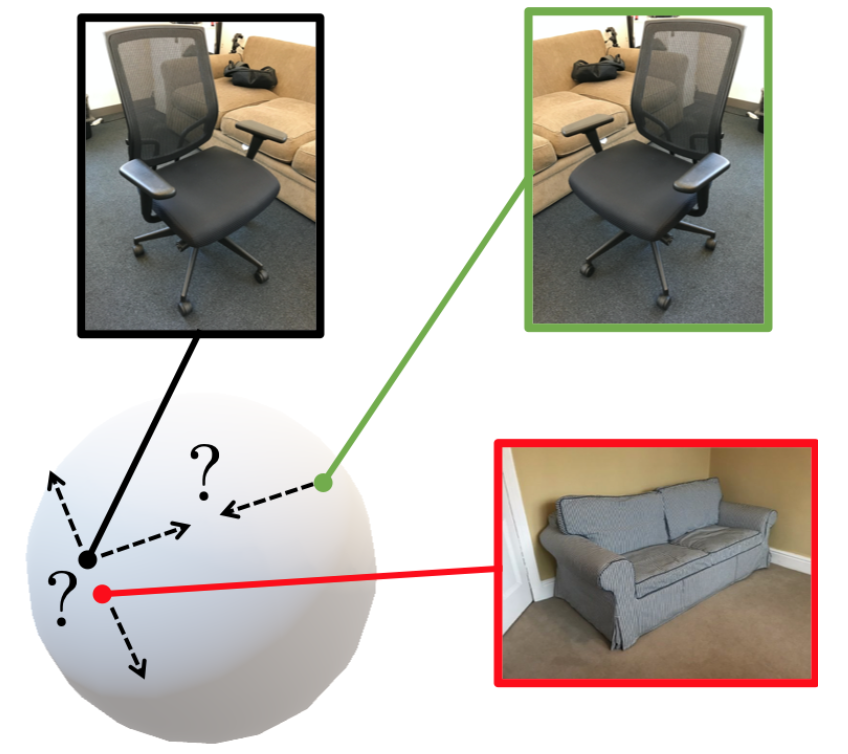

Yang Xiao, Yuming Du, Renaud Marlet

3DV 2021 Oral

project page | code

We train a direct pose estimator in a class-agnostic way by sharing weights across all object classes, and we introduce a contrastive learning method that has three main ingredients: (i) the use of pre-trained, self-supervised, contrast-based features; (ii) pose-aware data augmentations; (iii) a pose-aware contrastive loss. |

|

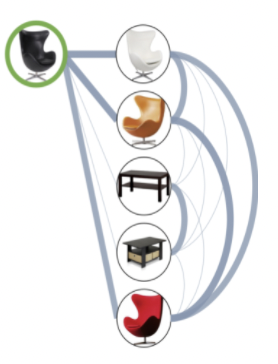

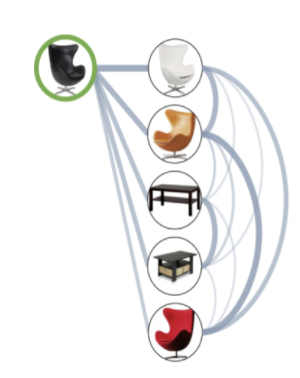

Xi Shen, Yang Xiao, Shell Xu Hu, Othman Sbai, Mathieu Aubry

NeurIPS 2021

project page | code

Given an initial similarity graph between a query and its top candidates in image retrieval, we propose a module dubbed Subgraph Similarity Refiner (SSR) to improve the similarity graph. |

|

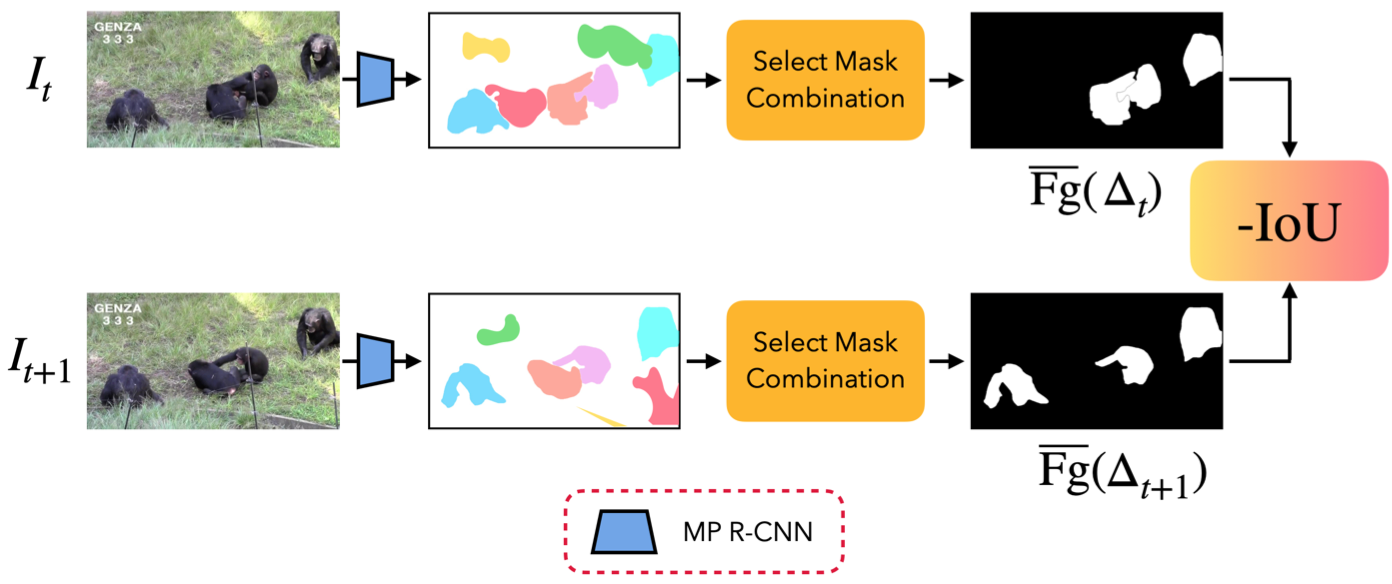

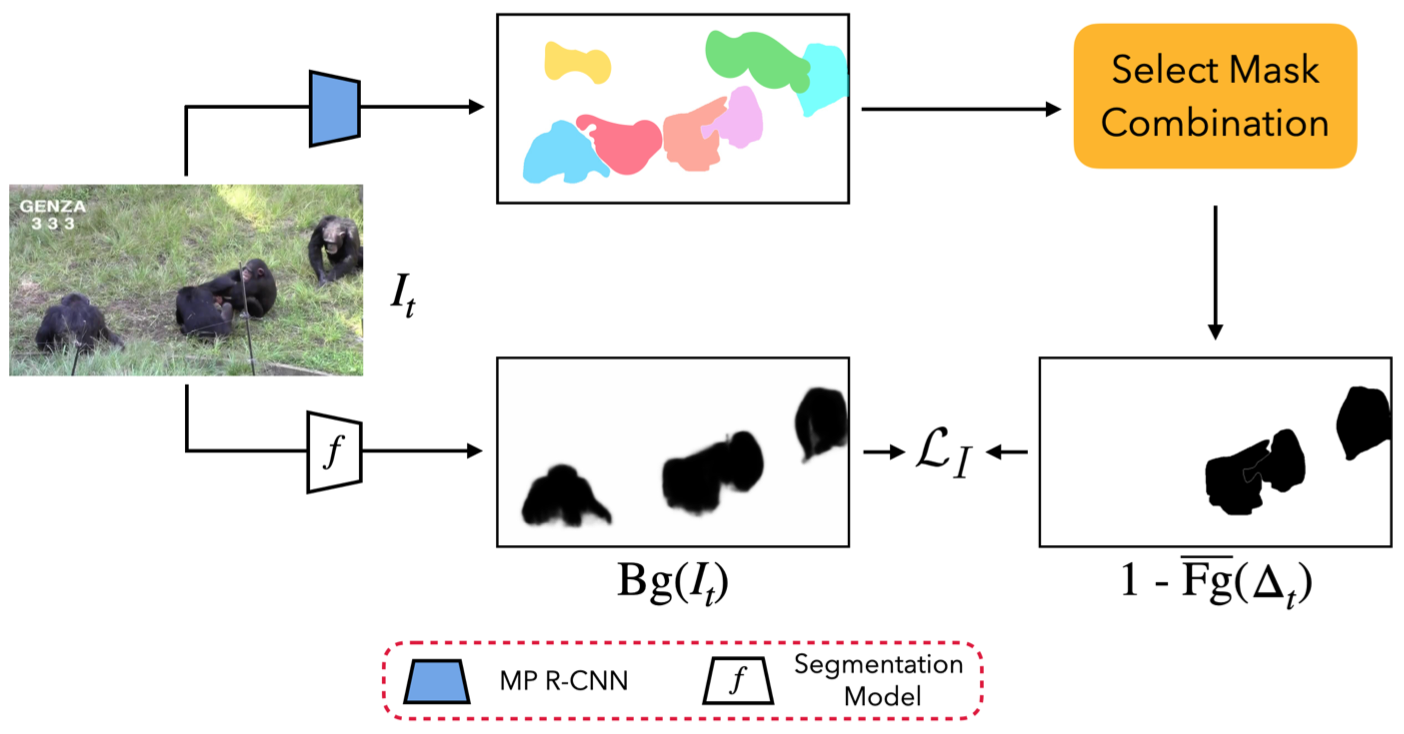

Yuming Du, Yang Xiao, Vincent Lepetit

ICCV 2021

project page | code

We explore the use of unlabeled videos to improve the performance of instance segmentation models on unseen classes, and propose a global optimization approach for video-based object discovery. |

|

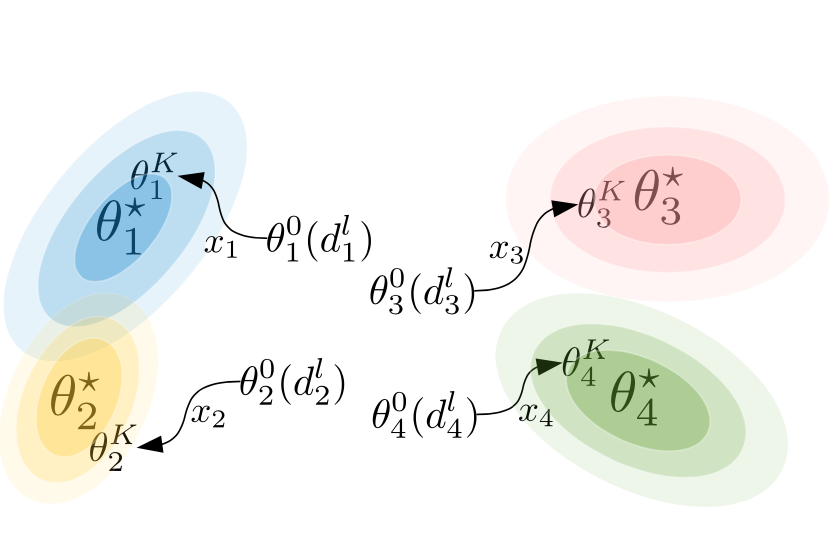

Yang Xiao, Renaud Marlet

ECCV 2020

project page | code (detection) | code (viewpoint)

We propose a simple yet effective framework for few-shot object detection and few-shot viewpoint estimation that outperforms state-of-the-art methods on both tasks. |

|

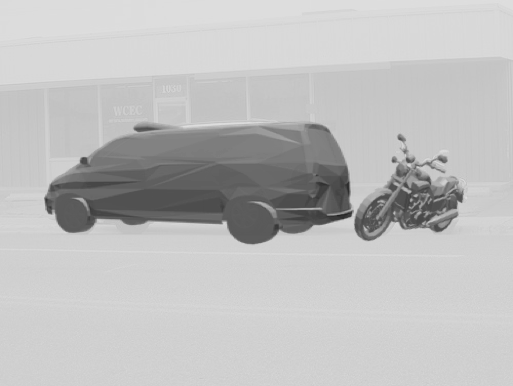

Xuchong Qiu, Yang Xiao, Chaohui Wang, Renaud Marlet

ECCV 2020 Spotlight

project page | code

Sharper occlusion boundaries and better depth maps from color images. |

|

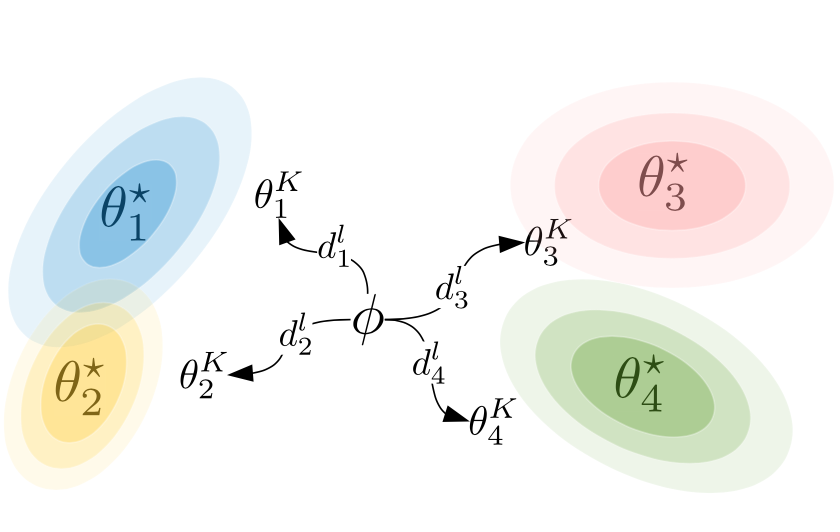

Shell Xu Hu, Xi Shen, Yang Xiao, Pablo Moreno, Neil Lawrence, Guillaume Obozinski, Andreas Damianou

ICLR 2020

code

We revisit the hierarchical Bayes and empirical Bayes formulations for multi-task learning, which can naturally be applied to meta-learning. |

|

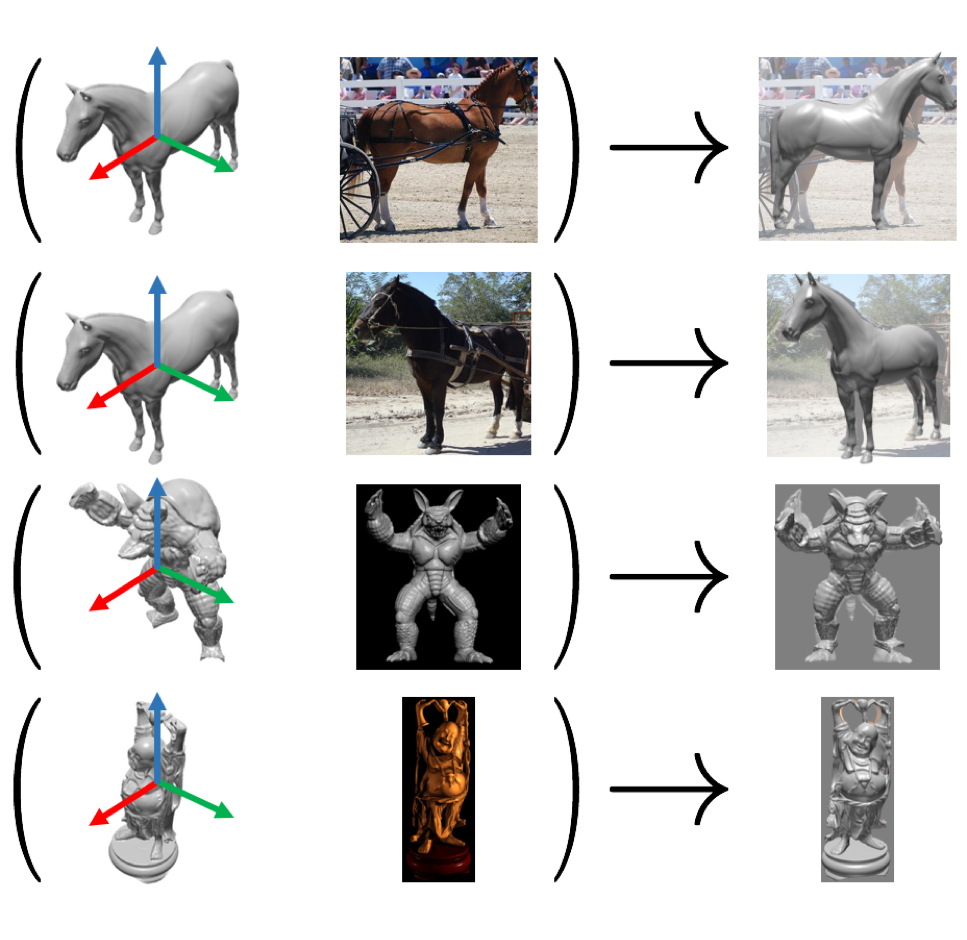

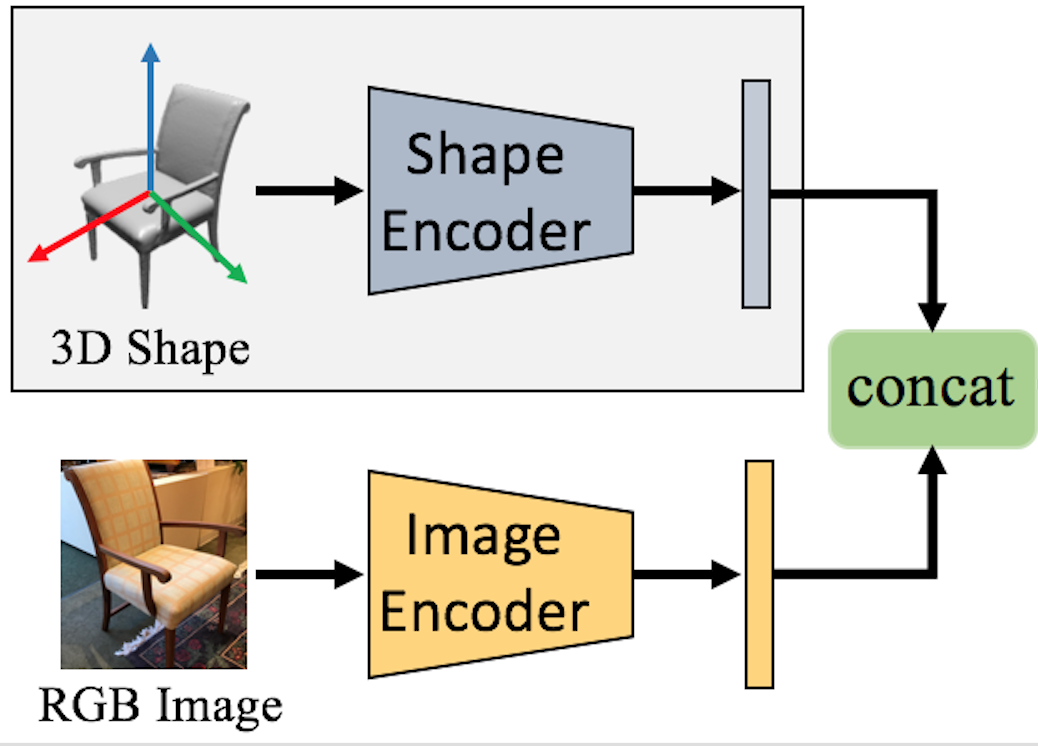

Yang Xiao, Xuchong Qiu, Pierre-Alain Langlois, Mathieu Aubry, Renaud Marlet

BMVC 2019

project page | code

By feeding both the image and a CAD model to the network, and using pose-aware data augmentation, we improve the generalization of object pose estimation to arbitrary objects (seen / unseen). |

|

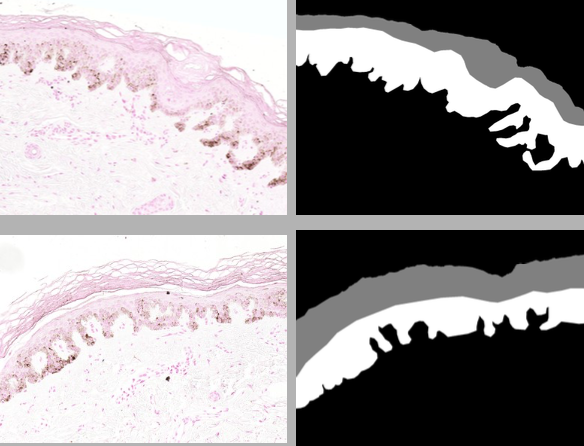

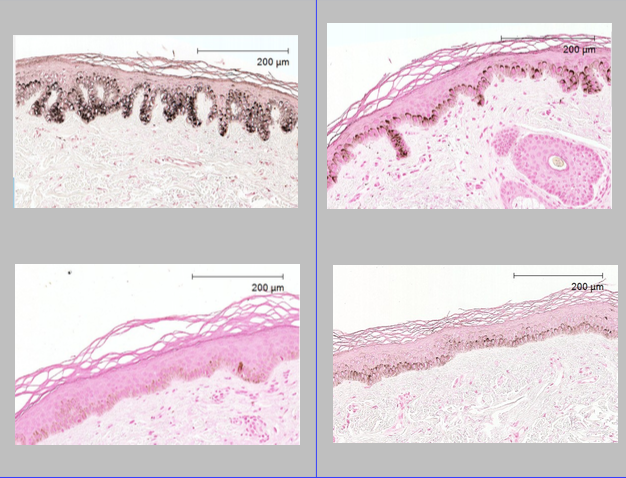

Yang Xiao, Etienne Decencière, Santiago Velasco-Forero, Hélène Burdin, Thomas Bornschlögl, Françoise Bernerd, Emilie Warrick, Thérèse Baldeweck

ISBI 2019

Color transfer between training samples can improve performance in the segmentation of histological images of human skin when labeled data is insufficient. |

|

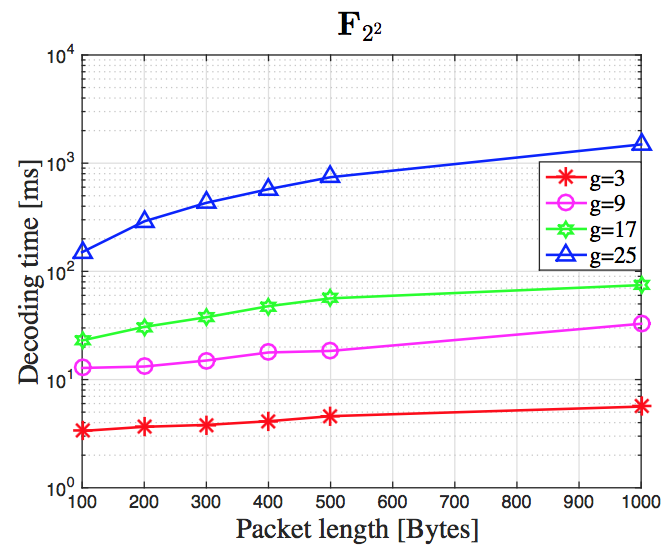

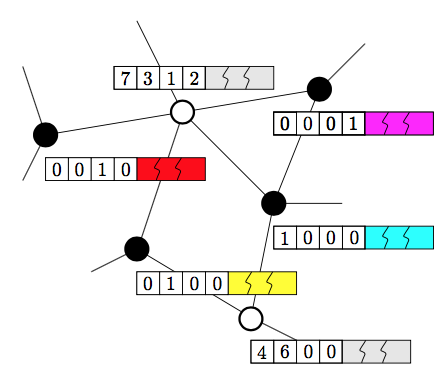

Qiuyi Wang, Yang Xiao, Michel Kieffer, Cedric Adjih

ICASSP 2018

Network decoding can be achieved by exploiting the structure imposed by the communication protocol on the packet headers. |

|

Reviewing for NeurIPS, ICLR. |

|

Teaching assistant for Computer Vision at ENPC (Spring 2019, Spring 2021). |

|

Machine vision for robotic manipulators

Initiated in 2018, DiXite is part of I-SITE FUTURE, a French initiative to answer the challenges of the sustainable city. The DiXite project focuses on the construction site. It acts as a hub for developing multidisciplinary research around construction scenarios in which automated and digitised processes are used to construct and maintain the city of tomorrow. |

|

This website takes the template from here.

|